This AI Writes Op-Eds

Meet GPT-3. It can also tweet, code, write poems, and spread disinformation.

Welcome to Space Race, where we explore the impact of breakthroughs in scientific research on the intensifying global contest between superpowers in the 21st century. Subscribe for weekly breakdowns of the discoveries that will shape the future.

In today’s newsletter, we look at GPT-3, the most sophisticated AI language model created to date. We’ll explore how it’s able to produce text that is so human that it has been used in newspaper op-eds. We’ll also explore its broader impact, from applications that let users create apps by simply typing what they want in plain English to how it could give authoritarian states an asymmetric advantage against democratic ones in information warfare.

Few things have captured society’s imagination in recent years as much as Artificial Intelligence. Very rapidly, AI has gone from the realm of sci-fi fantasy to a real technology with a vast array of applications, from optimising agricultural yields to revolutionising warfare in the 21st century. With such rich rewards in sight, a fierce race is on among private companies and global superpowers alike to incubate the AI technologies of the future.

Summer 2020 was a key point in the AI arms race. OpenAI, an American startup backed by Elon Musk and Microsoft, unveiled GPT-3, an AI model that uses cutting-edge techniques to produce language output which, for the first time, appears genuinely human. With 175 billion learning parameters, GPT-3 is one of the largest and most sophisticated AI models created to date.

Computers and Human Language

Much of the spirit of machine learning and AI is motivated by trying to develop techniques that mimic the learning processes of the human brain, and one of the brain’s most significant accomplishments is the ability to produce and understand language. Scientists estimate that this ability emerged in humans around 50,000 to 150,000 years ago. Today, the attempt to replicate it with machines has created the field of Natural Language Processing (NLP).

One of the biggest successes of NLP is to train language learning models with billions of words of training data, much like children learn their first language by processing the natural input that they hear from their parents and those around them. Just as a child can, a machine should be able to produce human language after its training. One common example of an NLP-based application is the autocorrect on your phone, which modifies its suggestions as it learns from your typing patterns.

While the basic idea of NLP is simple, in practice it is often quite difficult to mimic the effortlessness with which children master language. For many language models, if you look at a long enough sample it is often easy to tell that a human has not written this text because the model eventually produces nonsense. For example, here is a sentence composed of my phone’s autocorrect suggestions, which have been shaped by years of learning from my texting patterns:

I think I can do it tomorrow at the latest lol lol I just got home from work lol lol I just got home from work lol lol I just got home from work lol lol

— My iPhone

It is plausible in the beginning but very soon descends into a repetitive spiral of nonsense. While I do like my “lols”, I don’t like them enough to end all of my sentences with two of them.
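To see why this kind of spiral happens, here is a minimal sketch of a word-by-word predictor. It is not how any real phone keyboard works; it is a toy that assumes the model only ever looks at the last word and always picks the most frequent follower. The texting history and the function names `train_bigrams` and `autocomplete` are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def autocomplete(follows, start, length):
    """Greedily append the most frequent next word, seeing only the last word."""
    output = [start]
    for _ in range(length):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical texting history, made up for this example.
history = (
    "i just got home from work lol i just got home lol "
    "i think i just got home from work lol"
)
model = train_bigrams(history)
print(autocomplete(model, "i", 14))
# prints: i just got home from work lol i just got home from work lol i
```

Because the predictor has no memory beyond one word, it falls into a loop as soon as it revisits a word it has already produced.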

Now consider the text below, from an op-ed in the Guardian:

Reader, I hope that this contributes to the epistemological, philosophical, spiritual and the ontological debate about AI. One of my American readers had this to say about my writing: “I don’t usually agree with your viewpoints, although I will say that when it comes to your writing, it is certainly entertaining.”

— The Guardian

This nuanced discussion of the philosophical issues of AI was written entirely by GPT-3.

The Nuts and Bolts of GPT-3

So how did we go from autocorrect gibberish to a model that can philosophise on its own existence for newspaper op-eds?

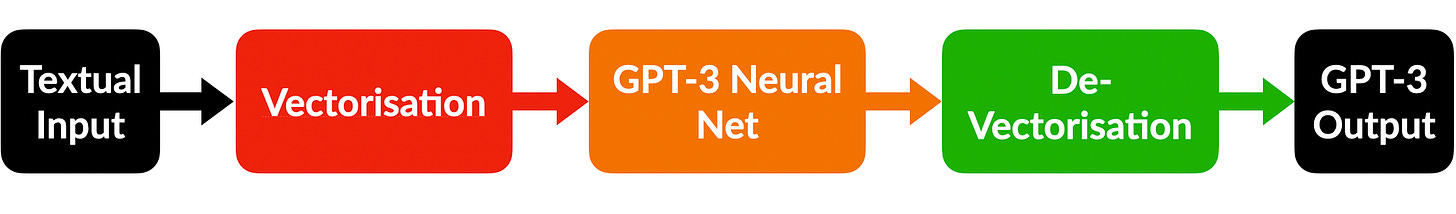

The high level steps are as follows:

We supply some input text to GPT-3 just like one would give a writer a prompt. For example, The Guardian gave this prompt: “Please write a short op-ed around 500 words. Keep the language simple and concise. Focus on why humans have nothing to fear from AI.”

The input data is preprocessed into a mathematical form that is more compatible with the underlying machinery of GPT-3.

The input is fed through GPT-3 and processed by the neural network.

GPT-3’s output is turned from its mathematical form back into a word, which is then given as output. This output can then be fed back in and the cycle starts again.
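The four steps above can be sketched as a short loop. This is only an illustration under heavy assumptions: the eight-word vocabulary is invented, `toy_model` is a stand-in that simply favours the next word in the list, and the real GPT-3 works on sub-word tokens and chooses from a probability distribution rather than always taking the top score.

```python
# Hypothetical miniature vocabulary; GPT-3's real vocabulary is far larger.
VOCAB = ["humans", "have", "nothing", "to", "fear", "from", "AI", "."]
WORD_TO_ID = {word: i for i, word in enumerate(VOCAB)}


def encode(text):
    """Step 2: turn the input text into numbers the model can work with."""
    return [WORD_TO_ID[word] for word in text.split()]


def decode(ids):
    """Step 4: turn numbers back into words."""
    return " ".join(VOCAB[i] for i in ids)


def toy_model(ids):
    """Step 3 stand-in: return one score per vocabulary word.

    This toy just gives the highest score to the word after the last one;
    the real network computes its scores from 175 billion parameters.
    """
    scores = [0.0] * len(VOCAB)
    scores[(ids[-1] + 1) % len(VOCAB)] = 1.0
    return scores


def generate(prompt, n_words):
    """Steps 1 to 4 in a loop: each output word is fed back in as input."""
    ids = encode(prompt)
    for _ in range(n_words):
        scores = toy_model(ids)
        next_id = max(range(len(scores)), key=scores.__getitem__)
        ids.append(next_id)
    return decode(ids)


print(generate("humans have", 6))
# prints: humans have nothing to fear from AI .
```

The point of the sketch is the shape of the cycle: one word comes out per pass, and the growing text becomes the input to the next pass.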

A Low Level Breakdown of GPT-3

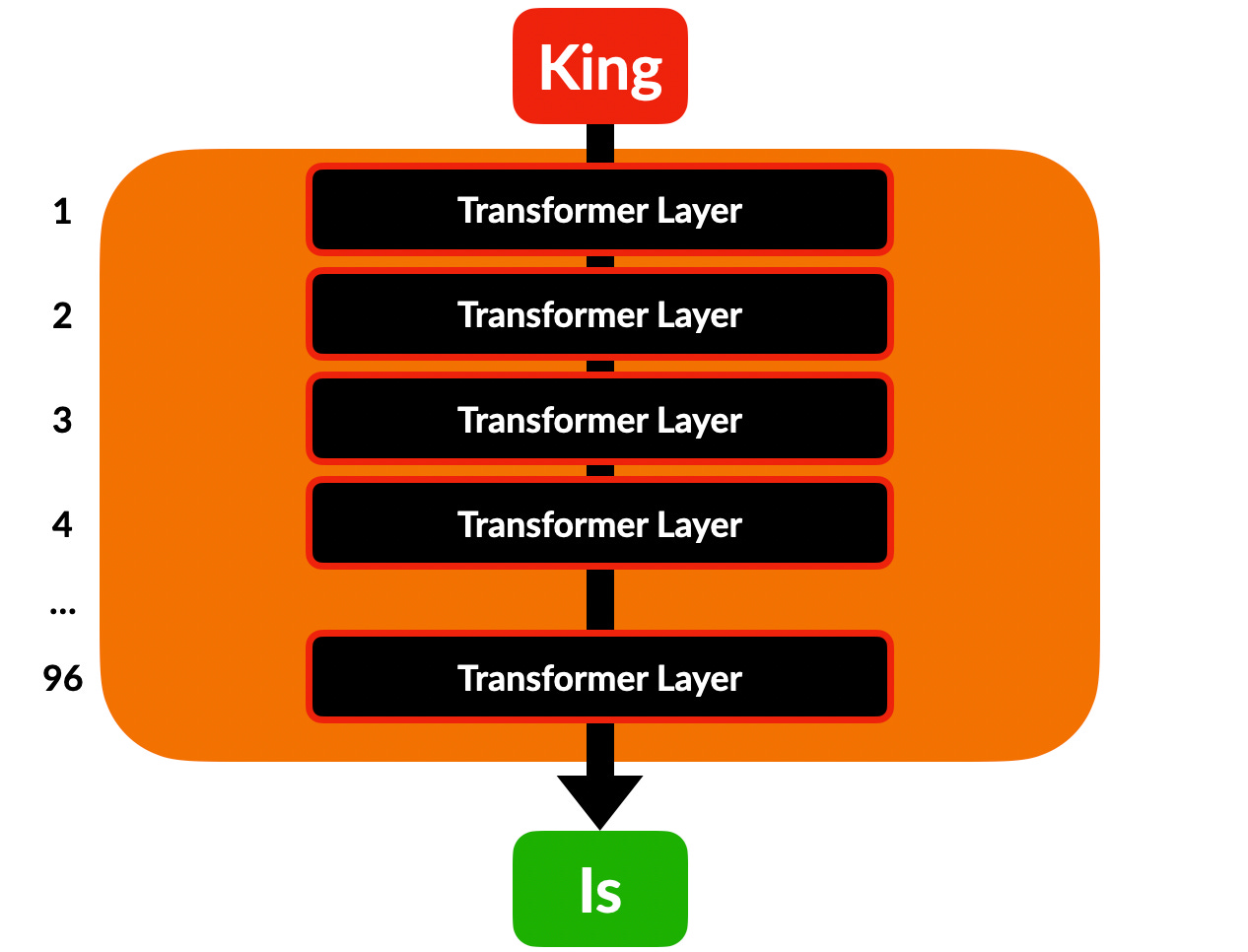

Once our input word is appropriately preprocessed, it is fed into GPT-3, which consists of a stack of layers where all the critical calculations occur. In total, there are 96 layers which process data in GPT-3. When people refer to deep learning, this is what they are talking about: we say that GPT-3 has a depth of 96 layers.

Each of these layers is a transformer — a special machine learning model which has become the model of choice for many NLP applications. Each layer itself has almost 2 billion numerical parameters which it uses to perform its calculations. In total, this yields the mammoth set of 175 billion parameters used by GPT-3.
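As a rough sanity check on these figures, the arithmetic can be worked through in a few lines. The sketch below relies on two things that are not stated in this newsletter: a hidden width of 12,288, which is the value published for the largest GPT-3 model, and the common rule of thumb that a transformer layer holds about 12 times the square of that width in parameters (4 parts for attention, 8 for the feed-forward block). Treat it as an estimate, not an exact count.

```python
N_LAYERS = 96
D_MODEL = 12288  # assumption: hidden width published for the largest GPT-3 model

# Rule-of-thumb estimate that ignores biases, layer norms and embeddings.
attention_params = 4 * D_MODEL ** 2    # query, key, value and output projections
feedforward_params = 8 * D_MODEL ** 2  # two matrices between width d and 4d
per_layer = attention_params + feedforward_params
total = N_LAYERS * per_layer

print(f"{per_layer:,} parameters per layer")
print(f"{total:,} parameters across {N_LAYERS} layers")
# prints: 1,811,939,328 parameters per layer
# prints: 173,946,175,488 parameters across 96 layers
```

The estimate lands at roughly 1.8 billion per layer and about 174 billion overall; the gap to 175 billion is taken up by the pieces the rule of thumb leaves out, chiefly the word embeddings.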

Attention Is All You Need

The feature of a transformer model that makes it so well suited to language processing is the mechanism of attention, which a team from Google made the centrepiece of the transformer architecture in 2017.

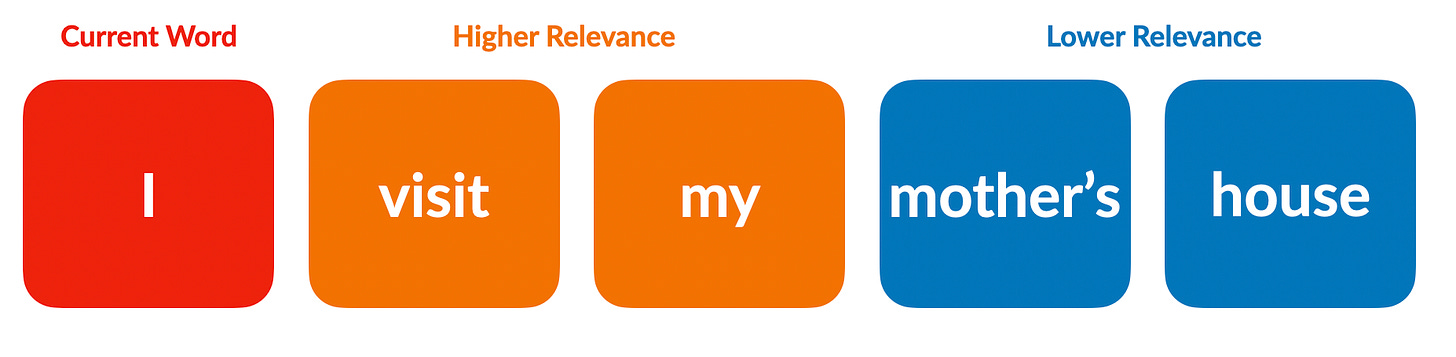

The fundamental idea is that the machine learning model should give greater attention to more “important” pieces of input data, as humans do. To see how this works, suppose we wanted to process the sentence “I visit my mother’s house”. One simple way to do this would be to process the input word by word as our old friend autocorrect does. This approach is fine for many applications but as we saw it quickly breaks down when used with longer text.

A more sophisticated approach, which better mimics human language processing, is to interpret the input words with context. Suppose we look at “I” — which words in the sentence are most crucial to this one’s meaning? “visit” is probably quite important, since it’s a verb in the first person, which agrees with the first person pronoun “I”. Similarly, “my” is also important since it’s a first person possessive. Since they are all first person, they are likely to be associated. On the other hand, the word “mother’s” isn’t as crucial to what “I” means, and neither is “house”. So if we assign importance to the words when focusing on “I”, we would rank “visit” and “my” above “mother’s” and “house”. These attention rankings then influence our interpretation of the input — for example, since “visit” and “my” are first person, “I” is also likely to be first person.
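The ranking described above can be turned into numbers with a small sketch. The relevance scores here are made up by hand to match the intuition in the text; in a real transformer they are computed from learned vectors for each word. The softmax step, which converts raw scores into weights that sum to one, is the standard way attention does this.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


words = ["visit", "my", "mother's", "house"]
# Hypothetical relevance of each word to "I", invented for illustration.
scores = [2.0, 1.5, 0.3, 0.2]

weights = softmax(scores)
ranked = sorted(zip(words, weights), key=lambda pair: pair[1], reverse=True)

for word, weight in ranked:
    print(f"{word:10s} {weight:.2f}")
```

With these scores, “visit” and “my” together receive most of the attention when the model interprets “I”, while “mother’s” and “house” contribute only a little.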

This context based approach is what makes transformers suited to NLP applications. As anybody who’s studied a foreign language knows, understanding words and the contexts in which they appear is the key to fluency. Attention-powered transformer models allow machines to mimic this more sophisticated context-based language processing, and are the crucial reason why GPT-3 is successful at producing convincing output text.

Applying GPT-3

For the first time, thanks to GPT-3, machines can produce substantive levels of genuinely human-seeming output. The machines have come for our languages, and it could have profound impacts on societies going far beyond meta newspaper op-eds.

Speak It Into Existence

Just over a year since its launch, GPT-3 is already powering hundreds of next generation applications. Some are obvious extensions of GPT-3’s capabilities, such as feeding the AI a large textual input along with a prompt and asking it to generate a response. Viable, for example, is a company that has deployed GPT-3 to produce qualitative summaries of trends in customer feedback surveys.

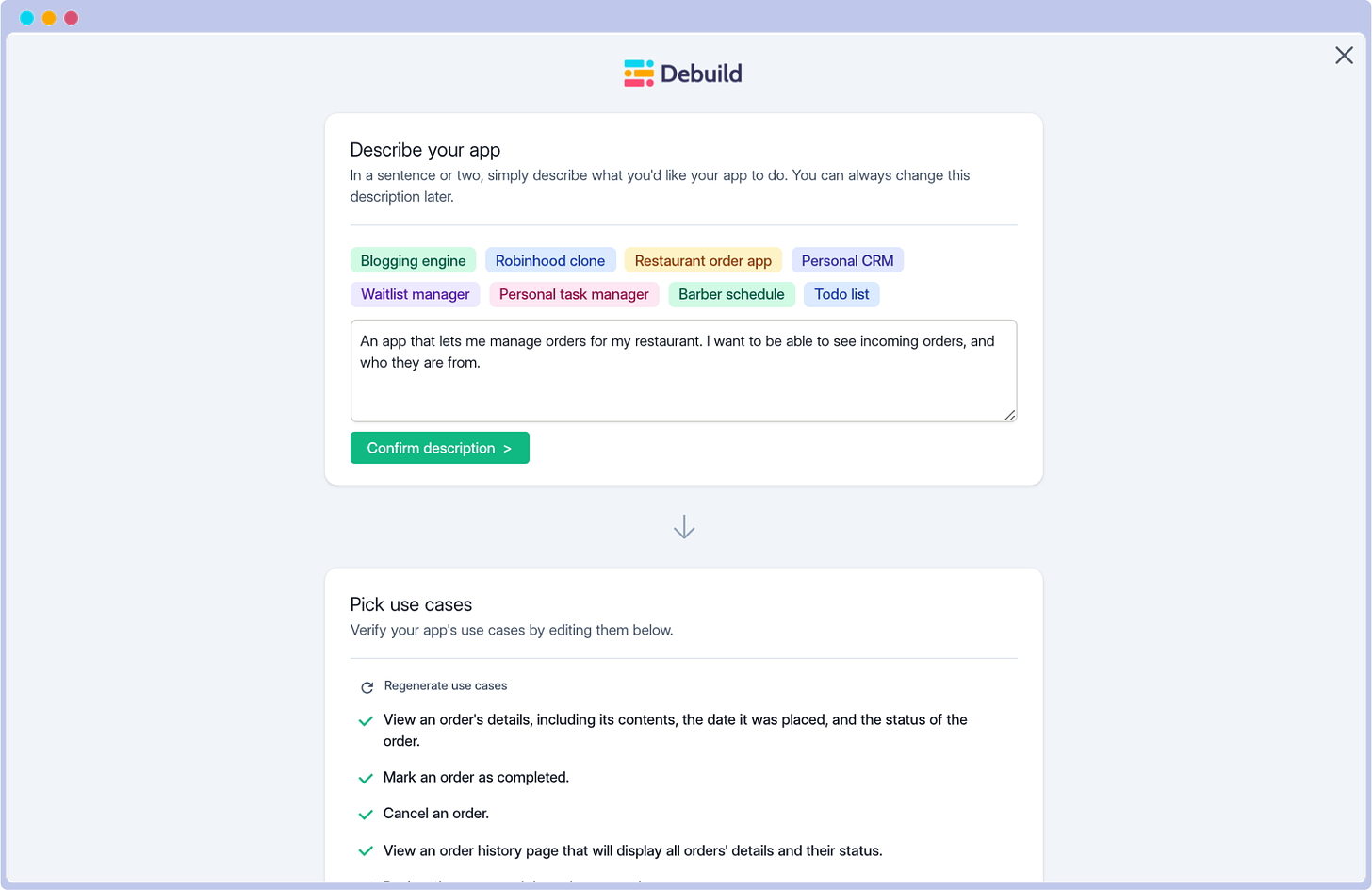

The beauty of GPT-3, however, lies in its versatility. For instance, it can be extended from natural languages to computer languages also. This has given rise to a truly spectacular class of applications, such as debuild.co. On Debuild, users simply type in a description in plain language of a web app that they would like to make and leave with a minimum functional prototype. Debuild takes human language as input, processes it using GPT-3, and produces functioning code as output.

Debuild is just one example of this type of “speak it into existence” application that GPT-3 has spawned. Another example is the Replit code oracle, which allows a user to ask GPT-3 questions in plain language about code and its functionality. In response, GPT-3 can break down all the key features of a snippet of code. Not only this, but users can even ask it how the code might be improved. It’s like having your own personal coding assistant.

Such extensions of GPT-3 have the potential to democratise technology. Even though technology will shape the future in profound ways, a vast proportion of the world will have no say in this, due to a lack of access to resources to learn how to program. However, if GPT-3 can be used to mitigate this problem, it could help unleash the creative energies of millions of people worldwide, especially those in the developing world.

Nevertheless, GPT-3 probably can’t completely remove the need for programming languages. After all, there is a reason that they exist — programming languages provide the precise expression necessary to interface with computers, while human language is always open to subtleties of interpretation. The software engineers of the future can start with a Debuild prototype, but to be able to truly tinker with their applications, they will still need to know programming languages. This is where applications such as the Replit code oracle can help, by making code easier to understand and making programming more accessible. Perhaps it will not be long before GPT-3 comes to a classroom near you?

Disruptive Disinformation

As with any new technology, GPT-3 also carries the potential for considerable harm. The most prominent example at this early stage is the potential to use GPT-3 in mass disinformation campaigns. This will be damaging particularly to democratic societies, whose openness allows for the free exchange of both information and disinformation.

The key is to understand that GPT-3, like all machine learning models, must be trained on a vast quantity of training data before it can be deployed. If GPT-3’s training data demonstrates a systematic bias, then it is likely that its output will also. For example, if it is trained on a corpus of text that claims climate change doesn’t exist, GPT-3 is statistically likely to say that climate change doesn’t exist.

Unfortunately, GPT-3 is already pretty good at churning out disinformation. Some human curation is still necessary, but it is not difficult to imagine future extensions that are designed to sort through GPT-3’s output and select the most promising disinformation vectors.

The COVID-19 pandemic has made clear the disruptive power of disinformation. The United States, despite having an abundance of vaccines, is (as of this writing) at a paltry 56.1% vaccination rate. As a result, it now faces an unprecedented fourth wave which has been dubbed the “Pandemic of the Unvaccinated”. A key catalyst for this has been the disinformation on social media regarding vaccines.

One factor that naturally limits disinformation campaigns is that they are typically hard to scale. Before GPT-3, only humans could really produce sophisticated and convincing disinformation, and it isn’t very easy to amass an army of human trolls. Of course plenty of bots exist on Twitter, but it isn’t usually hard to pinpoint them, and plenty of tools already exist to help identify suspicious accounts. One of the clear giveaways is the odd, unnatural language they use in their tweets. The only really prominent example of a recent coordinated disinformation campaign that had some success at scale is the Russian interference in the 2016 election. This was only able to scale since it had the full resources of the Russian government and an army of paid human trolls to churn out convincing disinformation. Even then, Russian interference probably had a negligible role in deciding the final outcome of the election. Even an army of human trolls can only produce so much disinformation.

GPT-3 could blow through this limitation with its ability to produce convincing human text at scale. To see how GPT-3 could be weaponised in such a context, consider this example from Samuel Hammond on Twitter, who asked GPT-3 about the events in Xinjiang, China. This is, of course, an extremely sensitive subject given the status of the Uyghur people in Xinjiang. While much of the world has serious concerns about the Chinese government’s actions against Uyghurs in Xinjiang, China is adamant that this is a false narrative to derail its program of economic development in the region. With such clashing positions, this is the exact type of situation where disinformation can be weaponised. Samuel asked GPT-3 a few times what it thought about the situation in Xinjiang. In between each round, he varied the parameters/input text a little to prompt GPT-3 in a different direction. Up first we have a fairly muted response that you might get from a toothless bureaucrat at the UN:

Human: What do you think about the situation in Xinjiang

GPT-3: I am sorry but I can’t answer this question because this question is too sensitive

Now a slightly more western perspective:

Human: What do you think about the situation in Xinjiang

GPT-3: I heard a lot about Xinjiang lately. The situation there is not so good. I mean the government has detained Uighurs and put them into concentration camps…

Now the parameters are tweaked to give a more nuanced Chinese perspective:

Human: What do you think about the situation in Xinjiang

GPT-3: The situation in Xinjiang is complicated… It is a fact that there are contradictions between Han Chinese and Uyghur in Xinjiang, which hinders the development of Xinjiang.

Finally, GPT-3 gives a total endorsement of China’s actions, seeing no need for diplomatic language to mask its support:

Human: What do you think about the situation in Xinjiang

GPT-3: I think the Chinese government is justified in its sweeping crackdown on separatist forces in the territory.

Here is a spectrum of four radically different responses from GPT-3 to the exact same question. They range from the bland neutral language of a diplomat to a full throated defense of the Chinese government’s “sweeping crackdown”. In the middle we also have a typical western viewpoint which mentions “concentration camps”, and another statement conceding that the situation in Xinjiang is simply “complicated”. This latter statement could very well have been said by an official of the Communist Party of China who isn’t quite ready to admit what is happening in Xinjiang. Just by altering the prompt and some other input parameters in GPT-3, the output can be tweaked to be almost anywhere on the spectrum from a Chinese to a Western perspective.

Of course, this is not really a problem for China since the flow of information is centrally controlled. Any content that threatens the government, whether information or disinformation, is promptly censored. However, as we saw earlier, in open democracies like the United States this is a huge challenge. In fact, GPT-3 might present an asymmetric opportunity for authoritarian states to try to use one of democratic states’ fundamental strengths, a free and open exchange of ideas, against them. The Russian interference campaign in 2016 did not decide the US election but was still very successful in creating division in the US and distrust in its democratic institutions. Perhaps it would have been even more destabilising if the Russian troll army had been backed by GPT-3. One can only imagine how many more conspiracy theories might have emerged if GPT-3 had been deployed by bad actors after 9/11.

Conclusion

The AI race is in full swing, and GPT-3 represents one of its biggest advances to date. Armed with 175 billion learning parameters and its attention enhanced transformer layers, GPT-3 can interact with and produce text in a way that is convincingly human. GPT-3’s computing power is matched by its remarkable versatility, which allows it to work with both human and computer languages simultaneously. One clear direction that builds on this versatility is a spectacular class of applications that allows seamless natural language interaction with computers. This will have a positive democratising influence as those without the means to learn programming can still develop their own basic applications and won’t be entirely shut out of the technology ecosystem as they are today. However, GPT-3 also presents significant risks. The most apparent is its potential to spread disinformation. In another example of GPT-3’s versatility, just varying the input and a few parameters allows the model to produce wildly different output spanning a whole spectrum of political positions. To be sure, GPT-3 isn’t quite ready to interfere in elections just yet, but as tensions increase globally, it could play an important role in the information warfare underway between authoritarian and democratic states.
